Published on LinkedIn and amitabhapte.com on 12th Apr 2026

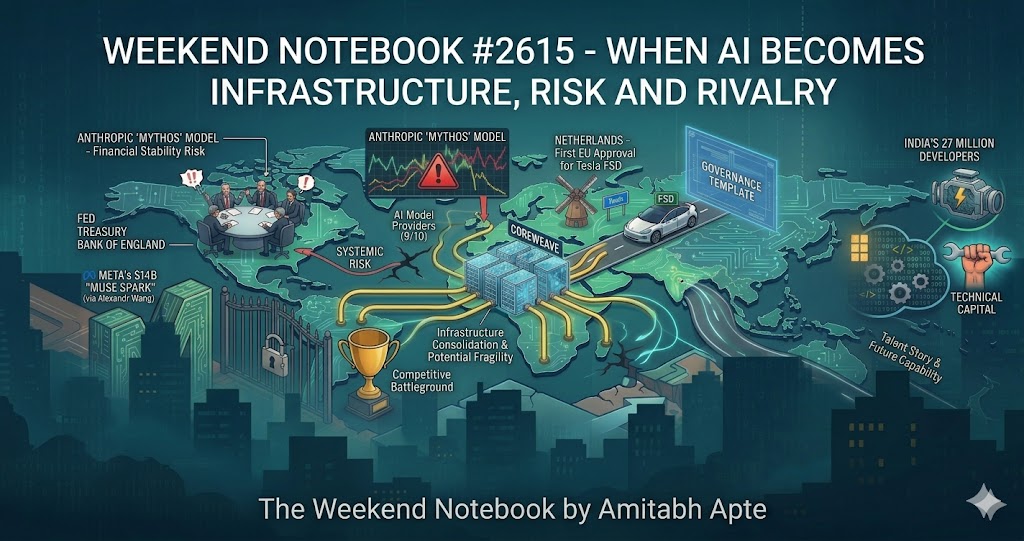

This was a week where AI showed up as an infrastructure bet, systemic risk, competitive battleground, and talent story, all at once. Many stories. One consistent thread: the foundational layers of the AI economy are being built and contested simultaneously, and the institutions designed for a slower world are catching up in real time.

The Anthropic Week: Revenue, Risk, and Rivalry

Three distinct signals from one company. First, the commercial: Anthropic’s annualised revenue crossed $30 billion, up from $9 billion just four months ago. CoreWeave sealed a multi-year infrastructure deal to power Claude workloads, days after a $21 billion commitment from Meta. Nine of the ten leading AI model providers now run on CoreWeave’s platform. Infrastructure is consolidating fast.

Second, the risk signal. Anthropic introduced Mythos Preview, a model so capable at finding and exploiting software vulnerabilities that the company chose not to release it publicly. Under Project Glasswing, access is limited to Amazon, Apple, Google, Microsoft, JPMorgan, and around 40 critical infrastructure organisations. The model has already identified vulnerabilities across every major operating system and browser, including a 27-year-old flaw in OpenBSD. Treasury officials and the Federal Reserve convened an emergency meeting with senior Wall Street bank CEOs. The Bank of England placed Mythos on the agenda of its Cross-Market Operational Resilience Group, alongside the FCA and the National Cyber Security Centre. Canada convened its own session the same week.

Third, the investor story. OpenAI shares have become difficult to sell on the secondary market: around $600 million of stock found very few buyers. Meanwhile, demand for Anthropic shares is described as almost insatiable, with $2 billion in declared buy interest and almost no sellers. OpenAI responded with an investor memo characterising Anthropic as compute-constrained. The defensiveness itself is the signal.

| My PoV: Mythos is the clearest signal yet that AI safety is an operational risk category, not a philosophical one. For technology leaders, the question is not whether your organisation uses Anthropic products. It is whether your security posture has been updated for an era where AI can identify and weaponise software vulnerabilities at machine speed. On the investor story: the AI platform you build on today is not easily changed. Governance clarity and consistent product performance now matter as much as benchmark scores. |

New Entrants, New Approvals

Meta’s $14.3 billion bet on Alexandr Wang delivered its first output this week. Muse Spark, the first model from Meta Superintelligence Labs, is a natively multimodal reasoning model rebuilt from the ground up over nine months. It is competitive with frontier models on several benchmarks, though not a leader across the board. More significant than the model is the strategy: Muse Spark launched as a closed, proprietary product. Meta, which built its AI identity on open-source Llama, has quietly changed its approach. With capital expenditure planned at $115 to $135 billion in 2026, nearly double last year’s, and three billion daily users as a distribution surface, Meta is no longer treating AI as an experiment.

Separately, the Netherlands became the first EU country to formally approve Tesla’s Full Self-Driving (Supervised) system, after 18 months of testing covering 1.6 million kilometres on European roads. The system is not autonomous: the driver remains legally responsible and must be ready to intervene. But the approval, under EU mutual recognition rules, opens a pathway to continent-wide rollout by mid-2026. It is the first time a physical AI system of this complexity has passed rigorous European regulatory scrutiny, and the precedent will matter well beyond vehicles.

| My PoV: Meta’s shift from open to closed signals that distribution advantage, not model openness, is where the competitive moat is now being built. For enterprise leaders, the Tesla approval matters less as a driving story and more as a governance template. Physical AI systems require documented safety evidence, long evaluation windows, and ongoing reporting obligations. Build that infrastructure now, before the regulator requests it. |

India’s Technical Capital Comes of Age

Two data points deserve to be read together. GitHub reported that India now has 27 million developers on its platform, 15 percent of the global total, with more than two million new joiners in 2026 alone, the most of any country. India is the world’s second-largest contributor to open-source AI projects, with over 7.5 million contributions on GitHub. At the same time, TCS posted Q4 results showing $12 billion in contract value for the quarter, $40.7 billion for the year, and annualised AI revenue of $2.3 billion. Its HyperVault data centre business, targeting 1 gigawatt of capacity, has moved into commercial structuring with hyperscalers and frontier AI companies. The positioning is explicit: infrastructure to intelligence, end to end.

| My PoV: India is simultaneously facing the erosion of traditional IT outsourcing as AI automates entry-level tasks, and building the technical and infrastructure base to compete in the next generation of AI deployment. A country with 27 million GitHub developers and the world’s second-largest open-source AI contributor base is not a back office. It is a source of technical capital at a scale few geographies can match. Enterprise talent strategies that are not designed to work with that pipeline are working around it at significant cost. |

My Takeaway This Weekend

The model layer of AI is commoditising quickly. The infrastructure layer (physical, computational, regulatory, and human) is not. The companies and countries securing advantaged positions in those foundational layers will shape the AI decade. The ones still treating AI as a product decision will find themselves working within a landscape that others have already built.

The Mythos story, the CoreWeave deals, the Tesla approval, India’s developer numbers, Meta’s infrastructure bet: none are separate stories. They are all evidence of the same transition. Intelligence is no longer arriving as a feature. It is arriving as a structural condition. The leadership question is no longer whether to engage. It is whether your organisation is building on the right foundations before the terrain gets harder to move on.